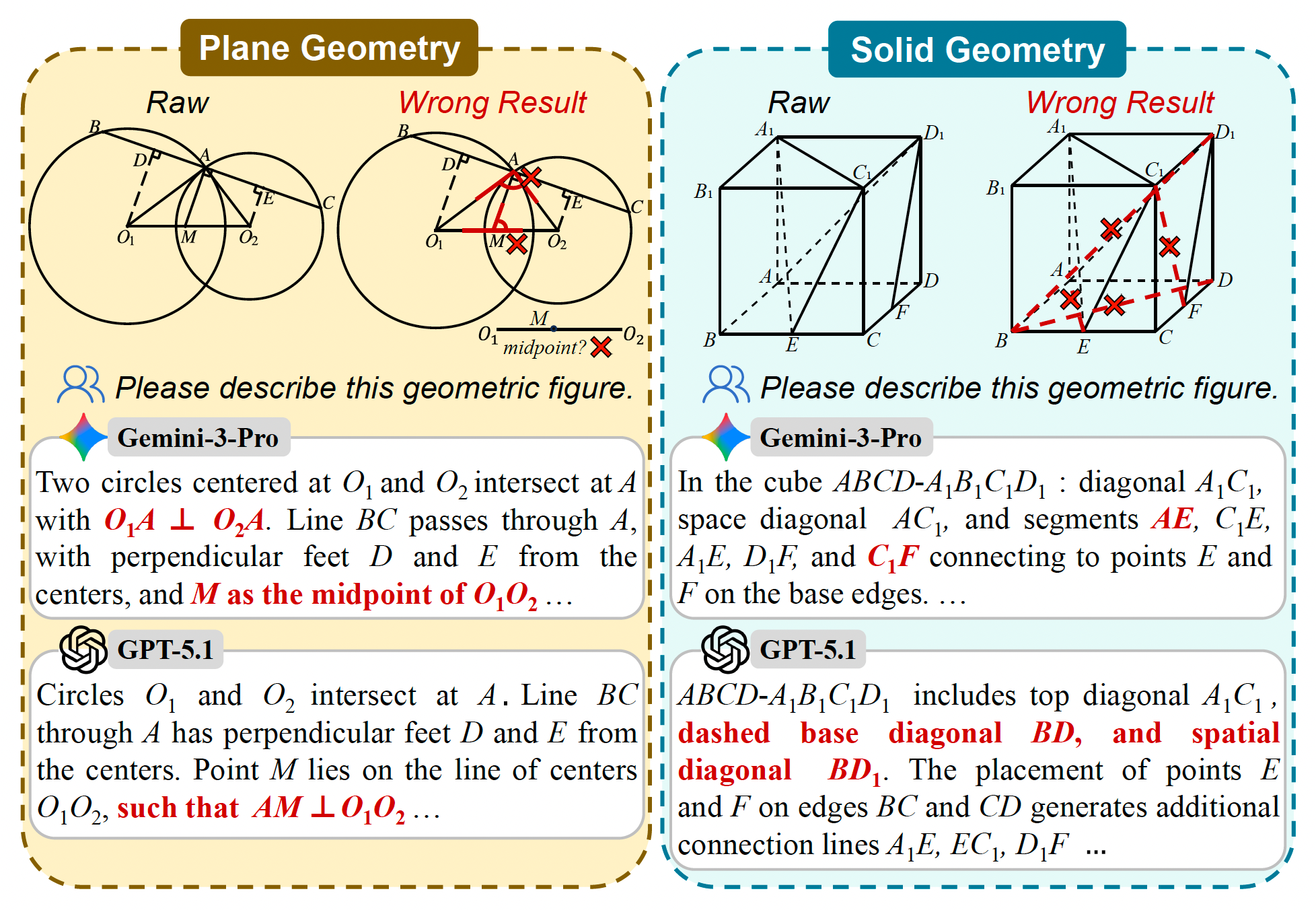

Figure 1. Hallucinations in geometric parsing by state-of-the-art (SOTA) MLLMs. Even strong closed-source models still struggle to correctly parse somewhat complex plane geometry diagrams and even simple solid geometry diagrams.

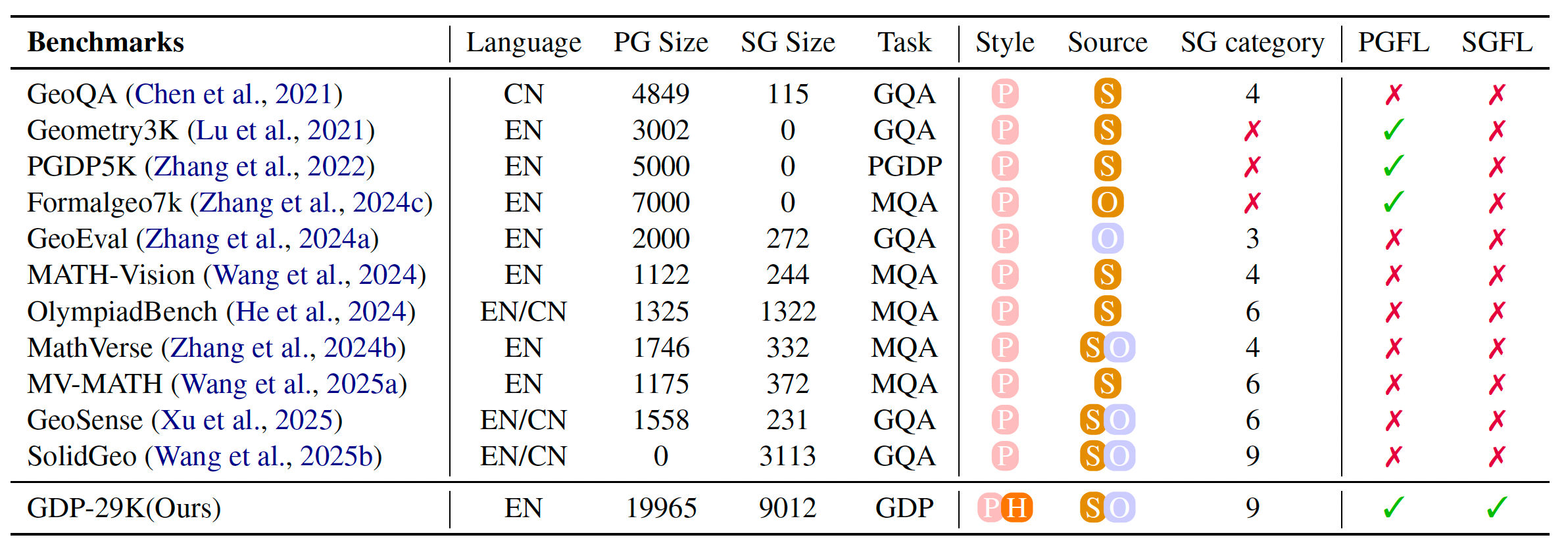

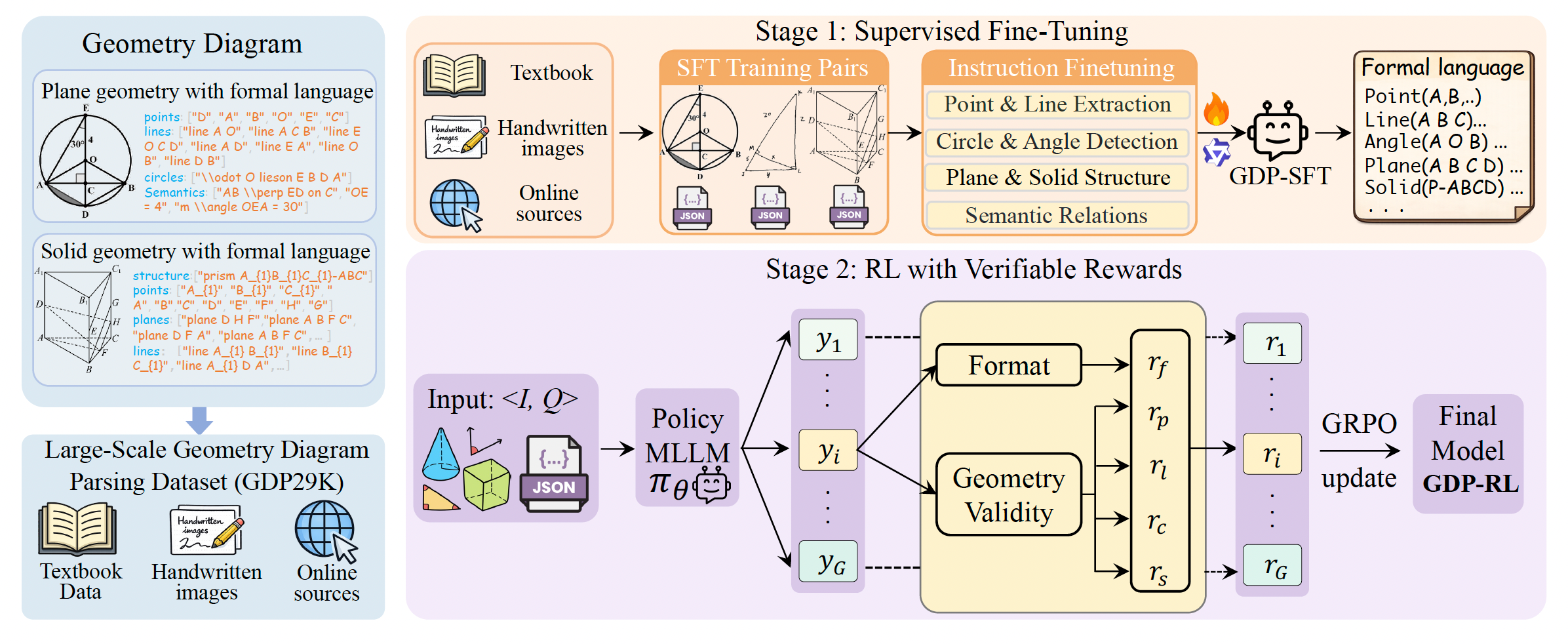

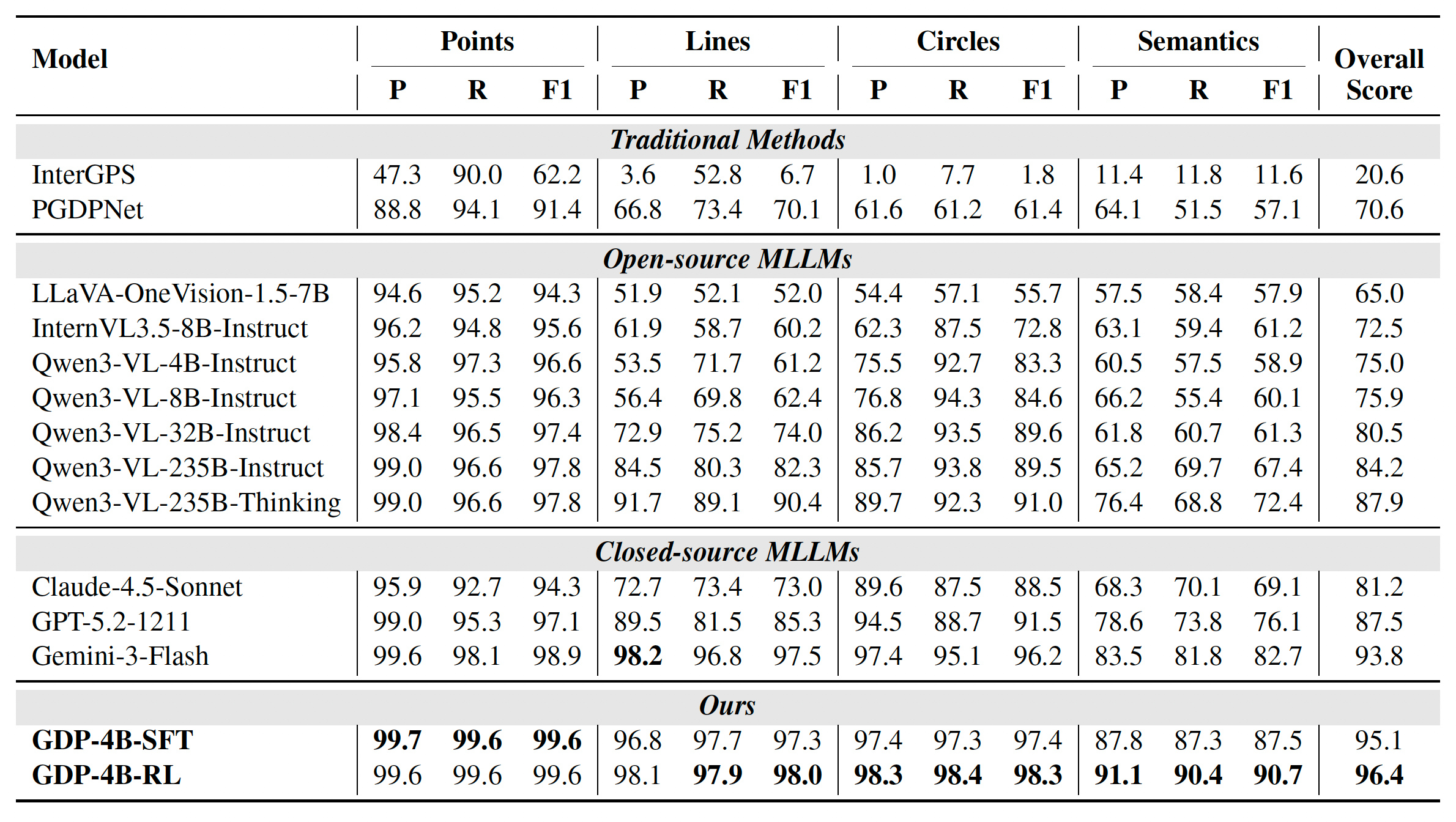

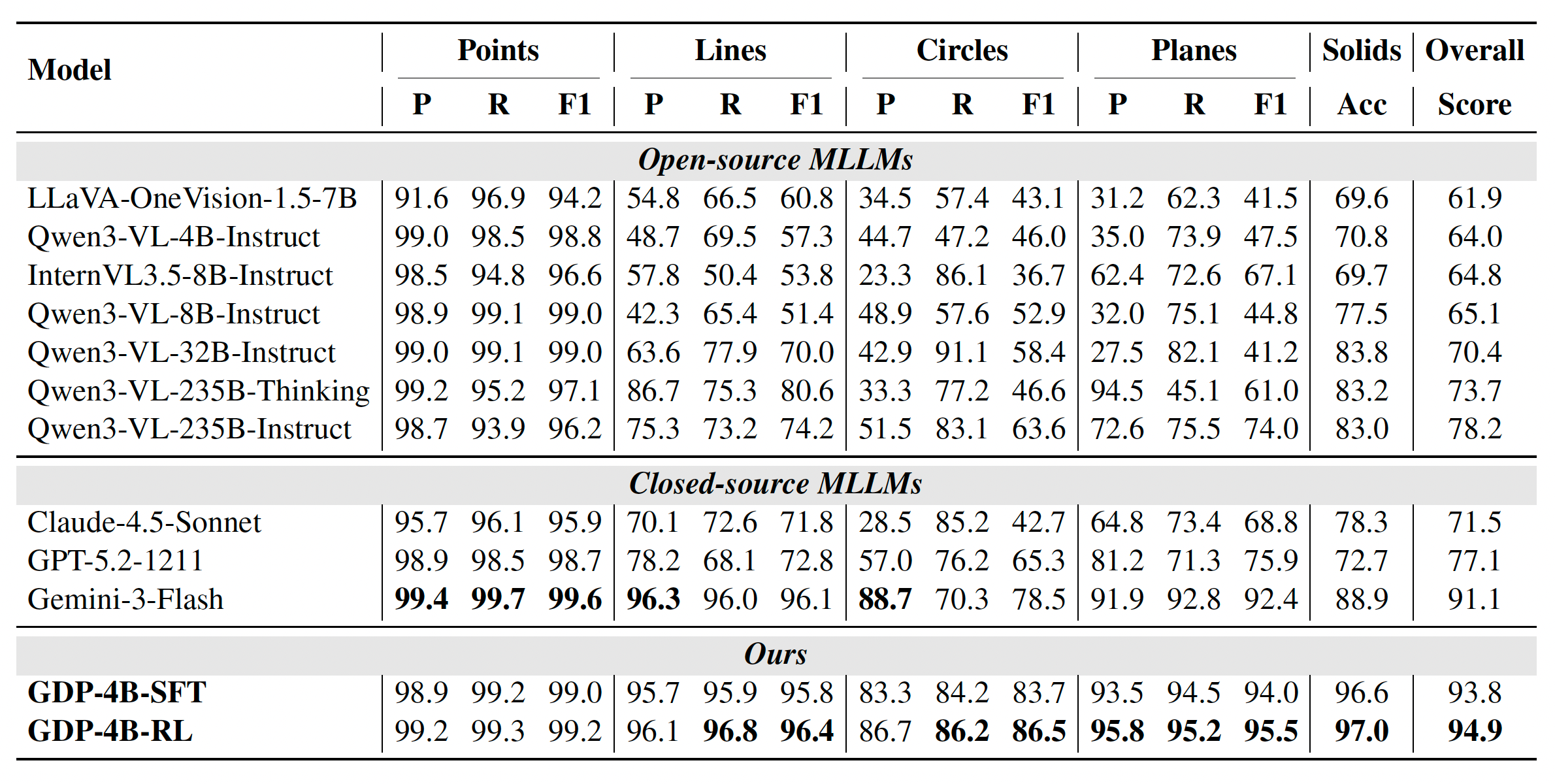

Multimodal Large Language Models (MLLMs) have made impressive progress, but they still struggle with geometric reasoning because their fine-grained perception of geometric primitives and relations remains unreliable. Geoparsing addresses this bottleneck by introducing a unified formal language that covers both plane and solid geometry, together with GDP-29K, a large-scale dataset containing 20K plane and 9K solid geometry diagrams collected from diverse real-world sources. The training pipeline combines supervised fine-tuning with reinforcement learning via verifiable rewards, so that generated parses are both syntactically correct and geometrically consistent.

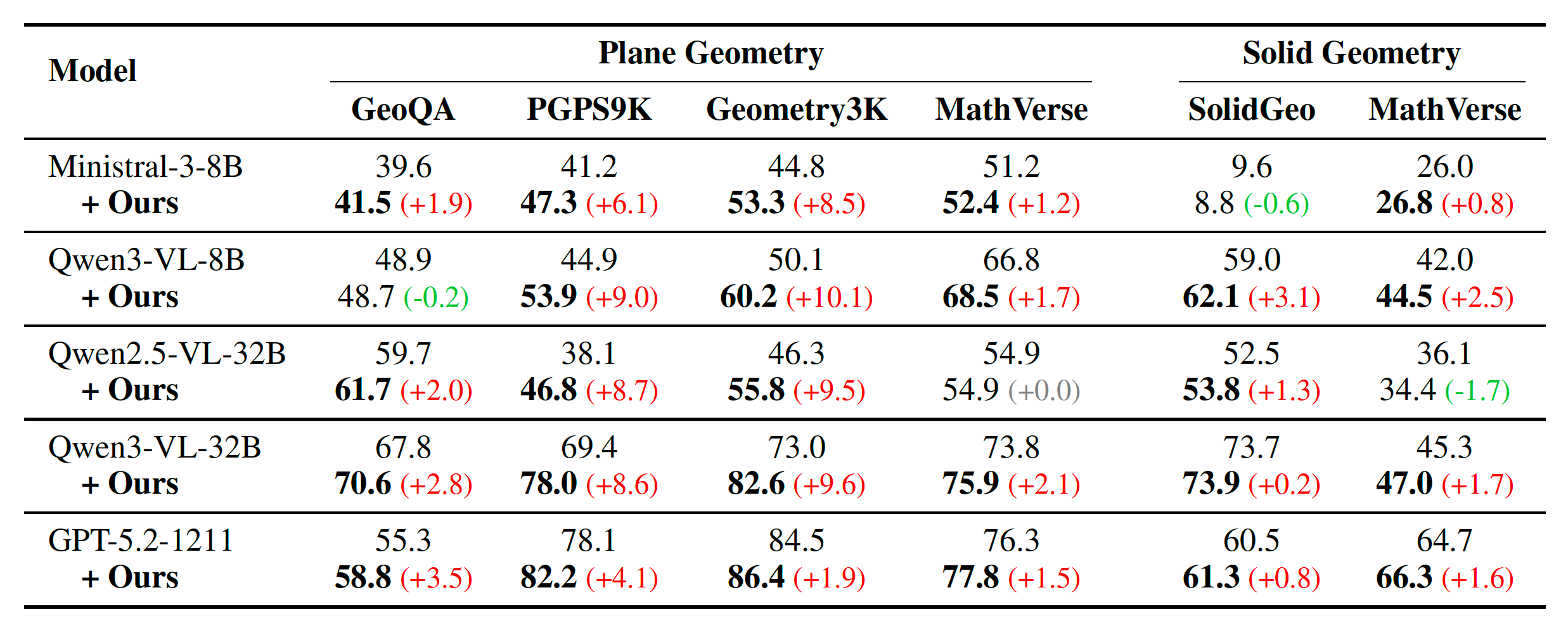

Beyond diagram parsing itself, the paper shows that these formal descriptions act as an effective cognitive scaffold for downstream reasoning, consistently improving multiple MLLMs on geometry benchmarks.

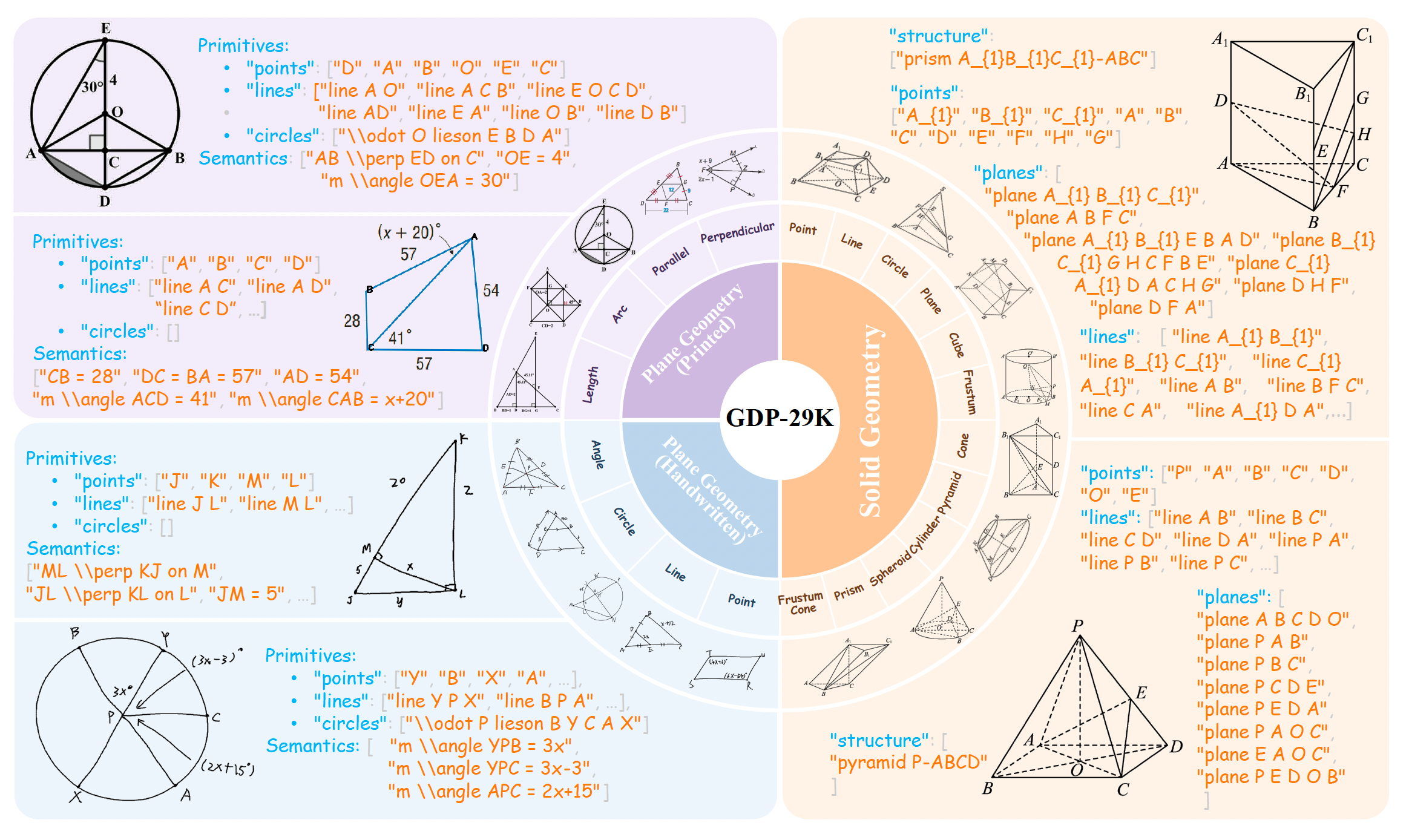

A single representation covers both plane and solid geometry, from basic primitives to higher-order structures such as planes, prisms, pyramids, cones, cylinders, and frustums.

GDP-29K contains 28,882 labeled samples, including printed and handwritten plane geometry as well as the first large-scale solid geometry parsing subset.

The parser is first aligned with supervised fine-tuning and then refined using verifiable rewards that explicitly enforce format compliance and geometric validity.
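To make "verifiable rewards" concrete, here is a minimal sketch of a rule-based reward with a format term and a geometric-validity term. The clause syntax (`Name(A, B, ...)`, one clause per line), the `Point` declarations, the validity rule, and the 0.5/0.5 weights are all hypothetical illustrations, not the paper's actual formal language or reward design; a real reward would also compare the parse against the ground-truth annotation.

```python
import re

# Hypothetical clause grammar: Name(arg, arg, ...), one clause per line,
# where every argument is an uppercase point label such as A or B1.
CLAUSE_RE = re.compile(r"^([A-Z][A-Za-z]*)\(([A-Z][0-9]*(?:,\s*[A-Z][0-9]*)*)\)$")


def parse_clauses(text):
    """Return a list of (name, args) tuples, or None if any line is malformed."""
    clauses = []
    for line in text.strip().splitlines():
        match = CLAUSE_RE.match(line.strip())
        if match is None:
            return None
        name = match.group(1)
        args = tuple(a.strip() for a in match.group(2).split(","))
        clauses.append((name, args))
    return clauses


def verifiable_reward(prediction, w_format=0.5, w_valid=0.5):
    """Toy reward: format compliance plus a simple geometric validity check."""
    clauses = parse_clauses(prediction)
    if not clauses:
        return 0.0  # malformed or empty output earns nothing
    declared = {args[0] for name, args in clauses if name == "Point"}
    relations = [(name, args) for name, args in clauses if name != "Point"]
    # Validity (hypothetical rule): every relation refers only to declared
    # points and has no degenerate repeated arguments such as Line(A, A).
    valid = all(
        set(args) <= declared and len(set(args)) == len(args)
        for _, args in relations
    )
    return w_format + (w_valid if valid else 0.0)


good = "Point(A)\nPoint(B)\nPoint(C)\nLine(A, B)\nPerpendicular(A, B, C)"
print(verifiable_reward(good))                    # 1.0
print(verifiable_reward("Point(A)\nLine(A, D)"))  # 0.5, D is never declared
print(verifiable_reward("Line A B"))              # 0.0, not well-formed
```

Because both terms are computed by deterministic rules, the reward can be checked without a learned judge, which is the property that makes it usable for reinforcement learning.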

GDP-29K is a large-scale benchmark for geometry diagram parsing, consisting of 28,882 annotated diagrams, including 19,965 plane geometry samples and 8,917 solid geometry samples. Beyond scale, the dataset emphasizes diversity in both visual style and geometric structure, with 5,516 handwritten diagrams included to better reflect realistic educational scenarios.
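As a quick consistency check on the reported counts, the two subsets sum to the stated total, and the handwritten diagrams make up roughly a fifth of the dataset:

```python
# Subset sizes as reported for GDP-29K.
plane, solid, handwritten = 19_965, 8_917, 5_516
total = plane + solid
assert total == 28_882  # matches the reported dataset size

shares = {
    "plane": plane / total,
    "solid": solid / total,
    "handwritten": handwritten / total,
}
for name, share in shares.items():
    print(f"{name}: {share:.1%}")
# plane: 69.1%
# solid: 30.9%
# handwritten: 19.1%
```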

The dataset is built to unify plane and solid geometry under a shared formal language. Each sample is paired with structured annotations that capture geometric primitives and semantic constraints, enabling precise parsing and providing strong support for downstream multimodal geometry reasoning. Compared with earlier datasets, it offers not only larger scale but also broader structural coverage. In particular, the solid subset fills a long-standing gap by providing formal annotations for 3D structures and spatial relations.
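One way to picture an annotation that pairs primitives with semantic constraints is the small data structure below. The class names, fields, and the serialized clause syntax are hypothetical choices made for illustration; they are not the released GDP-29K schema. The example encodes a pyramid P-ABCD whose edge PA is perpendicular to the base plane.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Primitive:
    kind: str      # e.g. "Point", "Line", "Circle", "Plane", "Pyramid"
    points: tuple  # vertex labels that define the primitive


@dataclass(frozen=True)
class Constraint:
    relation: str  # e.g. "Perpendicular", "Parallel", "OnPlane"
    operands: tuple  # primitives the relation applies to


@dataclass
class DiagramAnnotation:
    image_path: str  # hypothetical field name
    is_solid: bool
    primitives: list = field(default_factory=list)
    constraints: list = field(default_factory=list)

    def to_sequence(self):
        """Serialize into a one-clause-per-line sequence (hypothetical syntax)."""
        lines = [f"{p.kind}({', '.join(p.points)})" for p in self.primitives]
        for c in self.constraints:
            ops = ", ".join(f"{o.kind}({', '.join(o.points)})" for o in c.operands)
            lines.append(f"{c.relation}({ops})")
        return "\n".join(lines)


pa = Primitive("Line", ("P", "A"))
base = Primitive("Plane", ("A", "B", "C", "D"))
ann = DiagramAnnotation(
    "solid_0001.png",
    True,
    primitives=[Primitive("Pyramid", ("P", "A", "B", "C", "D")), pa, base],
    constraints=[Constraint("Perpendicular", (pa, base))],
)
print(ann.to_sequence())
# Pyramid(P, A, B, C, D)
# Line(P, A)
# Plane(A, B, C, D)
# Perpendicular(Line(P, A), Plane(A, B, C, D))
```

Keeping plane and solid primitives in the same structure is what lets a single serializer (and a single parser target) cover both subsets.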

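The model takes a geometry diagram and an instruction, then predicts a formal description sequence. Training has two stages: supervised fine-tuning aligns the parser with the formal language, and reinforcement learning with verifiable rewards then refines it for format compliance and geometric validity.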

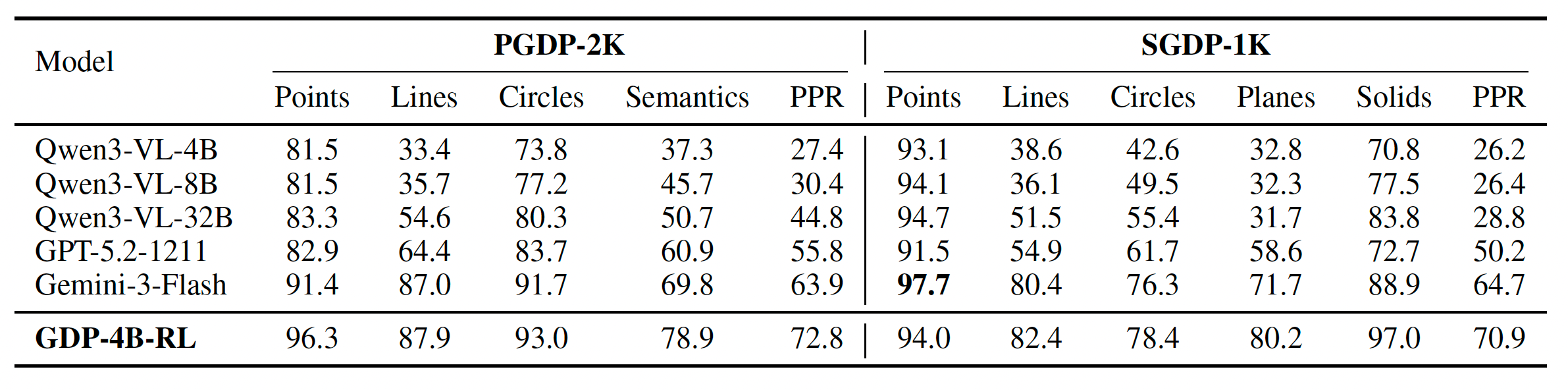

A high category-level F1 does not guarantee that a full diagram is parsed correctly. The paper therefore also reports sample accuracy and perfect parsing rate (PPR), showing that Geoparsing is much more reliable at producing holistically correct formal descriptions.
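The gap between the two kinds of metric can be shown with a small sketch. Here category F1 pools clause-level matches for one category across the whole dataset, while PPR counts a diagram only if its predicted clause set exactly equals the gold set. These definitions, the clause strings, and the toy data are assumptions for illustration; the paper's exact matching rules may differ, and sample accuracy is omitted because its definition is not given here.

```python
def category_f1(preds, golds, category):
    """Micro F1 over all clauses of one category, pooled across the dataset."""
    tp = fp = fn = 0
    for pred, gold in zip(preds, golds):
        p = {c for c in pred if c.startswith(category + "(")}
        g = {c for c in gold if c.startswith(category + "(")}
        tp += len(p & g)
        fp += len(p - g)
        fn += len(g - p)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 1.0


def perfect_parsing_rate(preds, golds):
    """Fraction of diagrams whose predicted clause set exactly equals the gold set."""
    hits = sum(set(p) == set(g) for p, g in zip(preds, golds))
    return hits / len(golds)


# Toy data: two triangles; the second parse misses a single edge.
golds = [["Line(A, B)", "Line(B, C)", "Line(C, A)"],
         ["Line(D, E)", "Line(E, F)", "Line(F, D)"]]
preds = [["Line(A, B)", "Line(B, C)", "Line(C, A)"],
         ["Line(D, E)", "Line(E, F)"]]

print(round(category_f1(preds, golds, "Line"), 3))  # 0.909
print(perfect_parsing_rate(preds, golds))           # 0.5
```

One missing edge barely moves the pooled F1, yet it makes half of the diagrams incorrect as a whole, which is why holistic metrics such as PPR are the stricter test.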

In particular, GDP-4B-RL reaches 72.8% PPR on PGDP-2K and 70.9% PPR on SGDP-1K, outperforming general-purpose MLLMs.

The parsed formal language also helps downstream reasoning. For example, augmenting Qwen3-VL-8B with Geoparsing outputs improves accuracy by +10.1 on Geometry3K, +9.0 on PGPS9K, and +3.1 on SolidGeo.

This supports the paper's main claim: precise geometric perception is a critical foundation for reliable multimodal geometry reasoning.

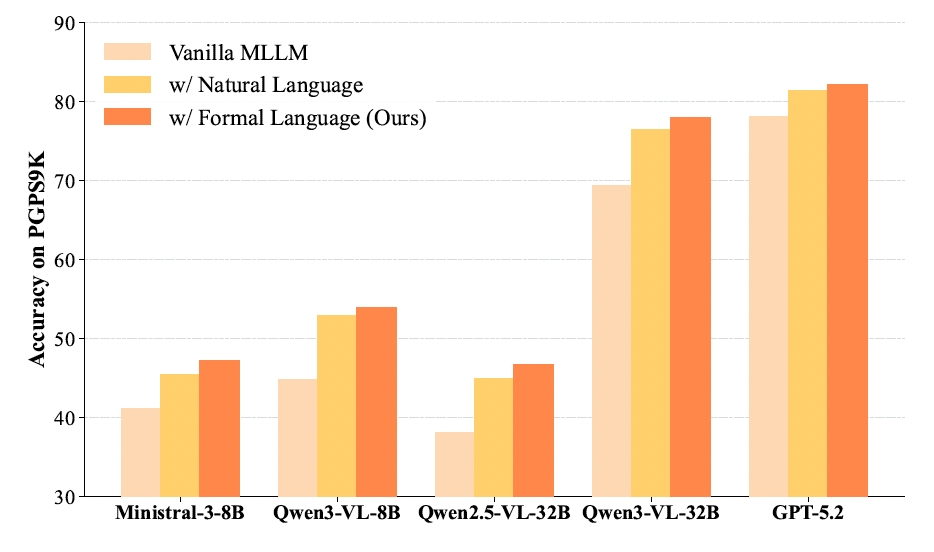

To isolate the impact of the parsed geometry description format on geometry reasoning, we compare our Formal Language (FL) against Natural Language (NL) on the PGPS9K benchmark. To keep the two conditions semantically equivalent, we use Gemini-3-Pro to translate our parsed formal sequences into coherent NL descriptions, so that the two forms differ only in representation. As illustrated in Figure 4, while both augmentation strategies improve over the vanilla baseline, FL consistently outperforms NL in assisting geometric reasoning across all five evaluated models. This suggests that compact, symbolic representations provide higher information density.
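A minimal sketch of how the three conditions (vanilla, +FL, +NL) could be set up so that the prompts differ only in the inserted description. The template wording, the `build_prompt` helper, and the example FL/NL strings are hypothetical; the diagram image itself is assumed to be passed to the MLLM separately and is not modeled here.

```python
# Hypothetical prompt template; the image is assumed to be supplied separately.
PROMPT_TEMPLATE = (
    "{context}"
    "Question: {question}\n"
    "Answer the question step by step."
)


def build_prompt(question, description=None):
    """Compose a reasoning prompt, optionally prefixed with a parsed description.

    The same header is used for formal and natural-language descriptions,
    so the two augmented conditions differ only in the description text.
    """
    if description is None:
        context = ""  # vanilla baseline: diagram + question only
    else:
        context = f"Diagram description:\n{description.strip()}\n\n"
    return PROMPT_TEMPLATE.format(context=context, question=question)


fl = "Line(A, B)\nLine(B, C)\nPerpendicular(A, B, C)"
nl = "Segments AB and BC are drawn, and AB is perpendicular to BC at B."
q = "Find the measure of angle ABC."

vanilla = build_prompt(q)
with_fl = build_prompt(q, fl)
with_nl = build_prompt(q, nl)
print(with_fl)
```

Holding the template fixed and swapping only the description is the property the FL-vs-NL comparison relies on: any accuracy difference can then be attributed to the representation rather than to the prompt wording.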

These qualitative examples show how the additional parsed formal information corrects downstream reasoning trajectories. In each case, the vanilla reasoning path makes a perceptual or structural mistake, while the parsing-augmented version recovers the correct answer.

@article{wang2026geoparsing,
  title={Geoparsing: Diagram Parsing for Plane and Solid Geometry with a Unified Formal Language},
  author={Wang, Peijie and Zhang, Ming-Liang and Cao, Jun and Deng, Chao and Ran, Dekang and Sun, Hongda and Bu, Pi and Zhang, Xuan and Wang, Yingyao and Song, Jun and Zheng, Bo and Yin, Fei and Liu, Cheng-Lin},
  journal={https://arxiv.org/abs/2604.11600},
  year={2026}
}